Most developers working with large language models are still operating in what can fairly be called the 'Vibes-Based' era of prompting. The process involves tweaking adjectives, rearranging instructions, adding phrases like 'be concise' or 'think step by step,' and hoping the output meets expectations. For exploratory tasks and creative brainstorming, this approach works tolerably well. But in a production-grade CI/CD pipeline—where an agent is generating API migration scripts, database schema changes, or infrastructure-as-code templates—'mostly working' is indistinguishable from failure.

The gap between vibes-based prompting and production reliability is one of the most significant unresolved challenges in integrating LLMs into the software development lifecycle (SDLC). Consider a deployment pipeline where an AI agent generates Terraform configuration for a new microservice. If the output is valid Terraform 95 percent of the time, each run in the remaining 5 percent is a deployment failure that triggers rollback procedures, pages the on-call engineer, and erodes team trust in the AI-assisted workflow. In a high-frequency pipeline processing dozens of deployments daily, a 5 percent failure rate makes failures a routine event: at 40 deployments a day, it works out to two failed deployments per day on average.
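
The arithmetic behind that claim can be sketched in a few lines. This is a back-of-the-envelope model, not a measurement: it assumes each deployment succeeds independently with the same probability, and the daily volumes are hypothetical.

```python
def expected_failures(per_run_success: float, runs: int) -> float:
    """Average number of failed runs out of `runs` attempts."""
    return (1.0 - per_run_success) * runs


def p_any_failure(per_run_success: float, runs: int) -> float:
    """Probability that at least one of `runs` independent runs fails."""
    return 1.0 - per_run_success ** runs


if __name__ == "__main__":
    # Hypothetical daily deployment volumes for a 95 percent success rate.
    for runs in (12, 24, 48):
        print(
            f"{runs} deployments/day: "
            f"{expected_failures(0.95, runs):.1f} expected failures, "
            f"{p_any_failure(0.95, runs):.0%} chance of at least one"
        )
```

Under these assumptions, a pipeline running 24 deployments a day has roughly a 71 percent chance of at least one failure on any given day, which is why a success rate that sounds high in isolation does not translate into a quiet on-call rotation.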

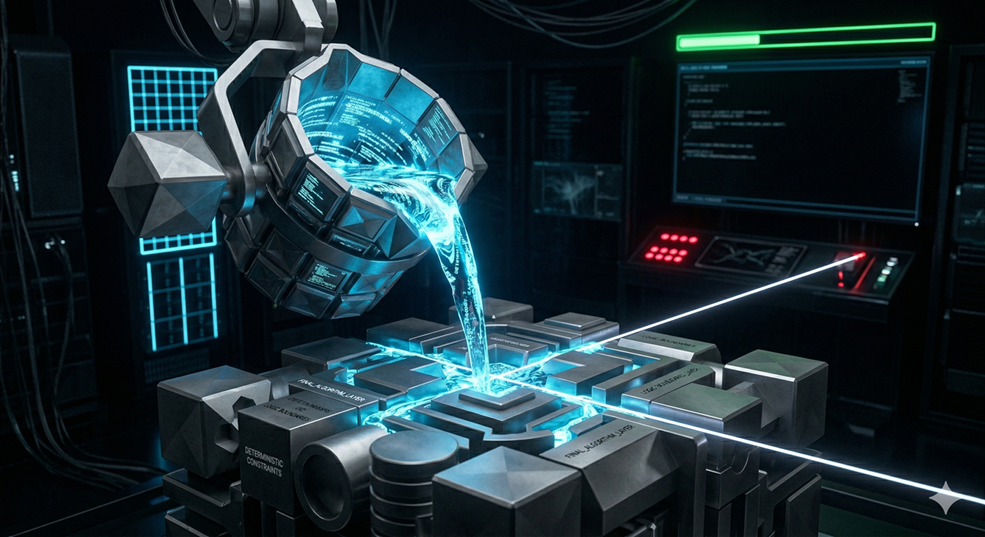

The shift toward 'Prompt Compilation' using frameworks like DSPy changes how the SDLC can use LLMs. Instead of hand-crafting prompt strings, which are brittle, hard to version, and difficult to test systematically, DSPy asks the developer to define the 'signature' of a task. A signature declares the inputs, the expected outputs, and any type constraints on them. An optimizer then 'compiles' an effective prompt by evaluating candidate prompts against a small set of ground-truth examples and selecting the formulation that scores best on a chosen metric, such as accuracy.
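
The selection loop at the heart of this idea can be illustrated without any framework. The sketch below is deliberately not DSPy's API: `fake_model`, `CANDIDATES`, `EXAMPLES`, and `compile_prompt` are hypothetical names, and the model is a deterministic stub so the example runs offline. It only shows the shape of the process: score each candidate prompt against ground-truth examples and keep the best one.

```python
from typing import Callable, List, Tuple

# Hypothetical ground-truth examples: (task description, expected label).
EXAMPLES: List[Tuple[str, str]] = [
    ("add column email to users", "schema_change"),
    ("rename endpoint /v1/items to /v2/items", "api_migration"),
    ("create s3 bucket for logs", "infrastructure"),
]

# Candidate prompt templates the toy optimizer chooses between.
CANDIDATES: List[str] = [
    "Classify: {task}",
    "Classify the task as schema_change, api_migration, or infrastructure: {task}",
]


def fake_model(prompt: str) -> str:
    """Stand-in for an LLM call; it only answers well when given the label list."""
    if "schema_change, api_migration, or infrastructure" not in prompt:
        return "unknown"
    task = prompt.rsplit(": ", 1)[-1]
    if "column" in task:
        return "schema_change"
    if "endpoint" in task:
        return "api_migration"
    return "infrastructure"


def accuracy(template: str, model: Callable[[str], str],
             examples: List[Tuple[str, str]]) -> float:
    """Fraction of examples for which the model's answer equals the expected label."""
    hits = sum(model(template.format(task=task)) == label for task, label in examples)
    return hits / len(examples)


def compile_prompt(candidates: List[str], model: Callable[[str], str],
                   examples: List[Tuple[str, str]]) -> str:
    """Return the candidate template with the highest accuracy on the examples."""
    return max(candidates, key=lambda t: accuracy(t, model, examples))


if __name__ == "__main__":
    best = compile_prompt(CANDIDATES, fake_model, EXAMPLES)
    print("selected prompt:", best)
```

A real optimizer searches a much larger space (instructions, few-shot demonstrations, and so on), but the contract is the same: the prompt becomes an output of a measurable procedure rather than an input typed by hand.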

This approach moves the discipline from prompt engineering, an art form driven by intuition and trial and error, to prompt programming, a systematic practice with clear inputs, measurable outputs, and reproducible optimization cycles. The benefit to the SDLC is immediate: prompt definitions can be version-controlled alongside application code, prompt performance can be tracked through CI metrics, and prompt regressions can be caught by automated test suites just like any other software regression.

Grammar-Constrained Decoding adds another layer of determinism and addresses a different failure mode entirely. Even when a prompt is well optimized, the LLM's output is generated token by token through probabilistic sampling. This means that an otherwise correct JSON response might occasionally contain invalid syntax: an unclosed bracket, a trailing comma, or a string field with unescaped quotes. The traditional workaround is a retry loop: if the output fails JSON parsing, re-prompt the model and hope for better luck. These retry loops are expensive in both latency and API costs.
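
For reference, the retry workaround usually looks something like the following. This is a minimal sketch: `call_model` stands for whatever function invokes the LLM, and `scripted_model` is a hypothetical test double that replays canned responses so the example runs offline.

```python
import json
from typing import Any, Callable, List, Tuple


def generate_json_with_retries(call_model: Callable[[], str],
                               max_attempts: int = 3) -> Tuple[Any, int]:
    """Call the model until its output parses as JSON.

    Returns the parsed value and the number of model calls that were needed.
    """
    last_error = None
    for attempt in range(1, max_attempts + 1):
        raw = call_model()  # every attempt costs a full round trip
        try:
            return json.loads(raw), attempt
        except json.JSONDecodeError as err:
            last_error = err
    raise ValueError(f"no valid JSON after {max_attempts} attempts") from last_error


def scripted_model(responses: List[str]) -> Callable[[], str]:
    """Hypothetical stand-in for an LLM that replays fixed responses in order."""
    it = iter(responses)
    return lambda: next(it)


if __name__ == "__main__":
    # The first response has a trailing comma, so a second call is needed.
    model = scripted_model(['{"replicas": 3,}', '{"replicas": 3}'])
    value, attempts = generate_json_with_retries(model)
    print(value, "after", attempts, "attempts")
```

The attempt counter makes the cost visible: every malformed response adds another model call's worth of latency and spend before the pipeline can proceed.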

Grammar-Constrained Decoding removes this category of failure by restricting the model's output to a formal grammar: a regex pattern, a JSON schema, or a custom BNF specification. At each token generation step, the decoder masks out any token that would violate the grammar, so the output is structurally valid by construction rather than by luck. The output may still contain semantic errors, but as long as generation runs to completion rather than being cut off by a token limit, it will be syntactically parseable, which removes an entire class of integration failures from the pipeline.
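
The masking step can be shown with a toy decoder. Everything here is an illustrative assumption: the grammar is a hand-written state machine that accepts only `{"ok":true}` or `{"ok":false}`, the vocabulary has eight tokens, and `fake_logits` returns fixed scores in place of a real model. Production systems apply the same idea to a full tokenizer vocabulary and a compiled grammar.

```python
import json
from typing import Callable, Dict, List

# Toy grammar as a state machine: state -> {allowed token: next state}.
GRAMMAR: Dict[int, Dict[str, int]] = {
    0: {"{": 1},
    1: {'"ok"': 2},
    2: {":": 3},
    3: {"true": 4, "false": 4},
    4: {"}": 5},
}
ACCEPT = 5

# Fixed scores standing in for model logits. Note that the two
# highest-scoring tokens are never valid under the grammar.
SCORES: Dict[str, float] = {
    "maybe": 0.9, ",": 0.8, "false": 0.7, "true": 0.6,
    "{": 0.5, "}": 0.4, '"ok"': 0.3, ":": 0.2,
}


def fake_logits(prefix: List[str]) -> Dict[str, float]:
    """Stand-in for a model forward pass; ignores the prefix."""
    return dict(SCORES)


def constrained_decode(logits_fn: Callable[[List[str]], Dict[str, float]],
                       grammar: Dict[int, Dict[str, int]],
                       accept: int, max_steps: int = 16) -> str:
    """Greedy decoding that only considers tokens the grammar allows."""
    state, out = 0, []
    while state != accept and len(out) < max_steps:
        allowed = grammar[state]
        scores = logits_fn(out)
        # Mask: drop every token the grammar forbids in the current state.
        masked = {tok: s for tok, s in scores.items() if tok in allowed}
        token = max(masked, key=masked.get)
        out.append(token)
        state = allowed[token]
    if state != accept:
        raise ValueError("stopped before the grammar reached an accepting state")
    return "".join(out)


if __name__ == "__main__":
    text = constrained_decode(fake_logits, GRAMMAR, ACCEPT)
    print(text, json.loads(text))
```

Without the mask, greedy decoding over these scores would emit `maybe` at every step. With it, the decoder produces `{"ok":false}`, which parses cleanly. Whether `false` is the right answer is a semantic question the grammar cannot settle.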

The combined effect of compiled prompts and constrained decoding changes how AI-generated artifacts can participate in the SDLC. Configuration files generated by an agent can be ingested directly by deployment tools without defensive parsing wrappers. API responses can be trusted to match the shape of the declared TypeScript interface. Test data can be loaded straight into a database without manual cleanup. Each manual step removed reduces friction, and the savings compound across hundreds of development tasks per sprint.

The testing strategy for deterministic AI outputs evolves in meaningful ways as well. Traditional testing of LLM integrations tends toward snapshot testing: capture a 'golden' output and assert that future outputs match it character for character. This is fragile because semantically identical outputs may differ in whitespace or ordering. With compiled prompts and constrained outputs, teams can instead define structural assertions: 'The output must be valid JSON matching this schema,' 'The generated SQL must reference only existing tables.' These structural tests are far more robust and meaningful than character-level comparisons.
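
The two example assertions might be written along these lines. This is a sketch using only the standard library: `REQUIRED_FIELDS` and `KNOWN_TABLES` are hypothetical, the schema check is hand-rolled, and the table extraction is a simple regex over `FROM` and `JOIN` clauses. A real pipeline would more likely use a dedicated schema validator and a proper SQL parser.

```python
import json
import re
from typing import Set

REQUIRED_FIELDS = {"service": str, "replicas": int}  # hypothetical schema
KNOWN_TABLES = {"users", "orders"}                   # hypothetical table catalog


def assert_matches_schema(raw: str) -> dict:
    """Parse `raw` as JSON and check required fields and their types."""
    data = json.loads(raw)  # raises if the text is not valid JSON
    assert isinstance(data, dict), "top-level value must be an object"
    for field, expected_type in REQUIRED_FIELDS.items():
        assert field in data, f"missing field: {field}"
        assert isinstance(data[field], expected_type), (
            f"{field} should be of type {expected_type.__name__}"
        )
    return data


def referenced_tables(sql: str) -> Set[str]:
    """Rough extraction of table names that follow FROM or JOIN."""
    pattern = r"\b(?:FROM|JOIN)\s+([A-Za-z_][A-Za-z0-9_]*)"
    return {name.lower() for name in re.findall(pattern, sql, flags=re.IGNORECASE)}


def assert_only_known_tables(sql: str) -> None:
    """Fail if the SQL mentions a table outside the known catalog."""
    unknown = referenced_tables(sql) - KNOWN_TABLES
    assert not unknown, f"unknown tables: {sorted(unknown)}"


if __name__ == "__main__":
    # Two outputs that differ in key order and whitespace both pass,
    # where a character-for-character snapshot test would fail one of them.
    compact = '{"replicas": 3, "service": "billing"}'
    pretty = '{\n  "service": "billing",\n  "replicas": 3\n}'
    assert assert_matches_schema(compact) == assert_matches_schema(pretty)
    assert_only_known_tables("SELECT * FROM users u JOIN orders o ON o.user_id = u.id")
    print("structural checks passed")
```

The checks assert properties that matter to downstream consumers (parseability, required fields, valid references) and ignore incidental formatting, which is what makes them stable across model and prompt revisions.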

As the industry matures, the distinction between vibes-based and compiled prompting will likely become as fundamental as the distinction between interpreted and compiled programming languages. Both have their place—exploratory sessions for rapid prototyping, compiled and constrained pipelines for production workloads. Teams that invest in deterministic AI infrastructure early will find that every phase of their SDLC benefits from the reliability and predictability that prompt compilation provides.