For over a decade, REST and GraphQL have defined how backend systems expose data. Applications were bound by rigid schema definitions and predefined endpoints. If the frontend needed a new aggregate view of data, a backend developer had to write a new endpoint or a new piece of middleware. This coupling between consumer needs and backend implementation created a persistent drag on delivery velocity across every SDLC phase, from requirements gathering through deployment.

Large Language Models equipped with tool-use capabilities, often referred to as function calling, are changing this dynamic in a fundamental way. An LLM acting as a backend orchestrator can decide at request time which internal microservices to query, formulate SQL or GraphQL statements on the fly, and shape the heterogeneous results into the exact format requested by the client. This can eliminate an entire class of integration tickets that traditionally clogged sprint backlogs.
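To make the tool-use idea concrete, here is a minimal sketch of the dispatch step. It assumes the model emits a JSON object naming a tool and its arguments. The tool names (`get_user_profile`, `list_invoices`), the in-memory data, and the `model_output` string are all hypothetical stand-ins, and no real LLM is called.

```python
import json
from typing import Any, Callable

# Hypothetical internal services, stubbed with in-memory data.
def get_user_profile(user_id: str) -> dict[str, Any]:
    return {"user_id": user_id, "name": "Ada", "tier": "enterprise"}

def list_invoices(user_id: str, status: str = "open") -> list[dict[str, Any]]:
    invoices = [
        {"id": "inv-1", "user_id": "u1", "status": "open", "amount": 120.0},
        {"id": "inv-2", "user_id": "u1", "status": "paid", "amount": 80.0},
    ]
    return [i for i in invoices if i["user_id"] == user_id and i["status"] == status]

# Allowlist: the model can only reach functions registered here.
TOOLS: dict[str, Callable[..., Any]] = {
    "get_user_profile": get_user_profile,
    "list_invoices": list_invoices,
}

def dispatch(tool_call_json: str) -> Any:
    """Parse a model-emitted tool call and run it if the tool is allowlisted."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))

# Stand-in for what a model might return for "show u1's open invoices".
model_output = '{"name": "list_invoices", "arguments": {"user_id": "u1", "status": "open"}}'
result = dispatch(model_output)
print(result)
```

The allowlist is the design choice that matters here: the model proposes a call, but only registered functions can actually run.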

Consider the refinement this brings to the requirements phase of the SDLC. In a traditional pipeline, a product owner writes a specification, a backend engineer translates that into an API contract, a frontend engineer consumes that contract, and any mismatch triggers a feedback loop that can span days. When an LLM-based orchestration layer sits between these concerns, the specification itself becomes the query. The intent expressed in natural language maps directly to data retrieval and transformation, compressing multi-day handoff cycles into near-real-time iterations.

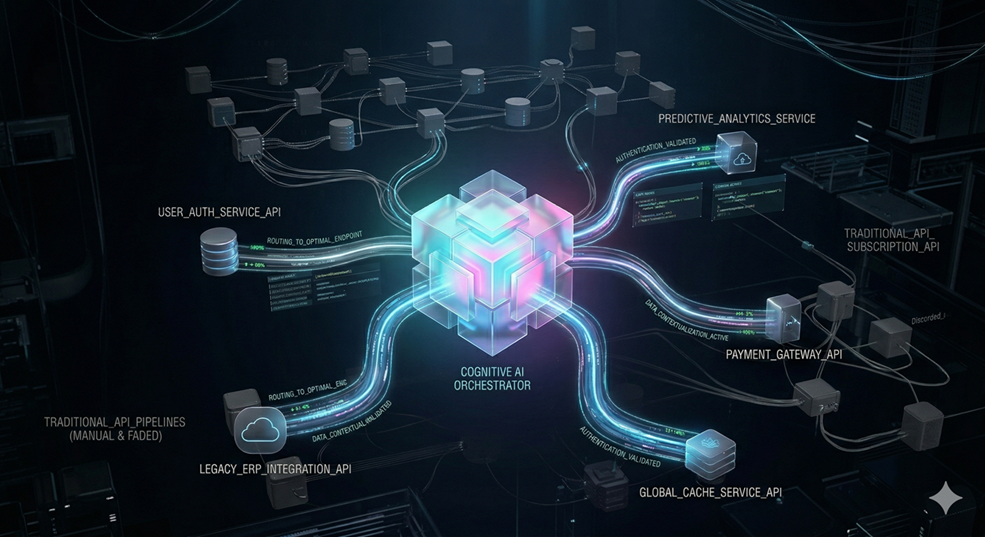

The implications for system design are equally significant. Traditional API gateway patterns—rate limiting, schema validation, request routing—remain essential for security and reliability. However, the orchestration logic that decides 'which services to call and in what order' no longer needs to be hard-coded. Instead of maintaining a sprawling collection of BFF (Backend-For-Frontend) services, each tailored to a specific client, teams can explore deploying a single cognitive orchestration layer that adapts to any consumer's needs dynamically.
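One way to picture this split is to treat the call sequence as data that a deterministic layer validates before running it. The sketch below assumes the orchestrator produces a plan as a list of steps. The service names, the `MAX_STEPS` limit, and the plan shape are illustrative assumptions, not a prescribed format.

```python
from typing import Any, Callable

# Hypothetical downstream services, stubbed for illustration.
def inventory(sku: str) -> dict[str, Any]:
    return {"sku": sku, "in_stock": 4}

def pricing(sku: str) -> dict[str, Any]:
    return {"sku": sku, "price": 19.99}

SERVICES: dict[str, Callable[..., dict[str, Any]]] = {
    "inventory": inventory,
    "pricing": pricing,
}
MAX_STEPS = 5  # assumed guardrail, analogous to a gateway limit

def validate_plan(plan: list[dict[str, Any]]) -> None:
    """Deterministic checks that stay in code, whatever produced the plan."""
    if len(plan) > MAX_STEPS:
        raise ValueError("plan exceeds step limit")
    for step in plan:
        if step["service"] not in SERVICES:
            raise ValueError(f"service not allowed: {step['service']}")

def execute_plan(plan: list[dict[str, Any]]) -> list[dict[str, Any]]:
    """Run validated steps in the order the plan specifies."""
    validate_plan(plan)
    return [SERVICES[step["service"]](**step["args"]) for step in plan]

# Stand-in for a plan an LLM orchestrator might emit for one client view.
plan = [
    {"service": "inventory", "args": {"sku": "SKU-42"}},
    {"service": "pricing", "args": {"sku": "SKU-42"}},
]
results = execute_plan(plan)
print(results)
```

Under this arrangement the routing decision moves out of hand-written BFF code, while validation and limits remain ordinary, testable logic.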

This shift also redefines how teams approach integration testing within the SDLC. Traditional integration tests validate that Service A returns the correct payload when called with specific parameters. With an LLM orchestrator, the integration surface becomes intent-based: 'Given this user question, does the orchestrator select the correct services, compose the right query, and return semantically accurate data?' This elevates testing from mechanical contract validation to semantic correctness verification—a more meaningful quality signal.
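An intent-based test suite could look roughly like the following sketch. The `plan_services` function is a keyword-based stand-in for the real LLM orchestrator, used only so the example runs. The questions and expected service sets are hypothetical.

```python
from typing import Callable

def plan_services(question: str) -> set[str]:
    """Stand-in for the orchestrator: returns the services it would call."""
    q = question.lower()
    selected: set[str] = set()
    if "invoice" in q or "overdue" in q:
        selected.add("billing")
    if "support contract" in q:
        selected.add("support")
    if "stock" in q or "inventory" in q:
        selected.add("inventory")
    return selected

# Each case pairs a user question with the services that should be selected.
CASES: list[tuple[str, set[str]]] = [
    ("Which enterprise clients have overdue invoices?", {"billing"}),
    ("Show overdue invoices for clients with an active support contract",
     {"billing", "support"}),
    ("How many units of SKU-42 are in stock?", {"inventory"}),
]

def run_intent_suite(
    planner: Callable[[str], set[str]],
    cases: list[tuple[str, set[str]]],
) -> list[tuple[str, set[str], set[str]]]:
    """Return (question, expected, actual) for every case the planner gets wrong."""
    failures = []
    for question, expected in cases:
        actual = planner(question)
        if actual != expected:
            failures.append((question, expected, actual))
    return failures

failures = run_intent_suite(plan_services, CASES)
print(f"{len(CASES) - len(failures)}/{len(CASES)} intent cases passed")
```

With a real model behind `planner`, the same harness would likely need repeated runs per question, since model output can vary between calls.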

One area where this concept brings particular refinement is data aggregation across microservice boundaries. Enterprise applications frequently require composite views that span inventory, billing, user profiles, and analytics. Building and maintaining dedicated aggregation endpoints for each view is notoriously expensive. An orchestration layer powered by an LLM can receive a descriptive request—such as retrieving overdue invoices for enterprise clients with active support contracts—and decompose it into the necessary queries across services, joining the results contextually without requiring endpoint-per-view engineering effort.
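The overdue-invoice request can be sketched as three per-service queries joined on a shared client ID. Everything below is assumed for illustration: the record shapes, the `client_id` join key, and the helper names. In practice the decomposition would come from the orchestrator rather than from hand-written code.

```python
from datetime import date

# Hypothetical data owned by three separate services.
CLIENTS = [
    {"client_id": "c1", "name": "Acme", "tier": "enterprise"},
    {"client_id": "c2", "name": "Bolt", "tier": "startup"},
    {"client_id": "c3", "name": "Cyan", "tier": "enterprise"},
]
CONTRACTS = [
    {"client_id": "c1", "active": True},
    {"client_id": "c2", "active": True},
    {"client_id": "c3", "active": False},
]
INVOICES = [
    {"invoice_id": "i1", "client_id": "c1", "due": date(2024, 1, 10), "paid": False},
    {"invoice_id": "i2", "client_id": "c1", "due": date(2024, 3, 1), "paid": False},
    {"invoice_id": "i3", "client_id": "c2", "due": date(2024, 1, 5), "paid": False},
    {"invoice_id": "i4", "client_id": "c3", "due": date(2024, 1, 5), "paid": False},
    {"invoice_id": "i5", "client_id": "c1", "due": date(2024, 1, 2), "paid": True},
]

def enterprise_client_ids() -> set[str]:
    """Query 1 (user profile service): clients on the enterprise tier."""
    return {c["client_id"] for c in CLIENTS if c["tier"] == "enterprise"}

def active_contract_client_ids() -> set[str]:
    """Query 2 (support service): clients with an active contract."""
    return {c["client_id"] for c in CONTRACTS if c["active"]}

def overdue_invoices(today: date) -> list[dict]:
    """Query 3 (billing service): unpaid invoices past their due date."""
    return [i for i in INVOICES if not i["paid"] and i["due"] < today]

def overdue_for_supported_enterprise(today: date) -> list[dict]:
    """Join the three result sets on client_id and shape the response."""
    eligible = enterprise_client_ids() & active_contract_client_ids()
    names = {c["client_id"]: c["name"] for c in CLIENTS}
    return [
        {
            "invoice_id": inv["invoice_id"],
            "client": names[inv["client_id"]],
            "due": inv["due"].isoformat(),
        }
        for inv in overdue_invoices(today)
        if inv["client_id"] in eligible
    ]

report = overdue_for_supported_enterprise(date(2024, 2, 1))
print(report)
```

The final function is the "endpoint-per-view" code that the article argues an orchestration layer could compose on demand.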

While traditional APIs are likely to remain the secure perimeter for atomic transactions (payment processing, authentication flows, and data mutations that demand strict ACID compliance), the orchestration layer is rapidly shifting toward cognitive models. The concept of a 'Cognitive Middleware' layer, where rigid endpoints are replaced by intent-driven prompts, has the potential to vastly accelerate integration speed while reducing the maintenance burden that plagues conventional microservice architectures.

The SDLC refinement extends to deployment and observability as well. Traditional API orchestration requires careful versioning strategies, with v1, v2, and v3 endpoints coexisting to support backward compatibility. A cognitive orchestration layer can interpret intent regardless of underlying schema changes, provided the data semantics remain consistent. This can reduce the operational overhead of managing API version matrices and simplify rollback strategies during incident response.

For engineering teams evaluating this approach, the key consideration is not whether to replace REST or GraphQL entirely—those protocols serve critical roles in type safety and network efficiency. Rather, the question is where in the architecture rigid contracts create unnecessary friction. Every SDLC phase benefits when the integration layer can adapt to changing requirements without requiring dedicated engineering effort for each new data view.