The Shadow Logic Problem

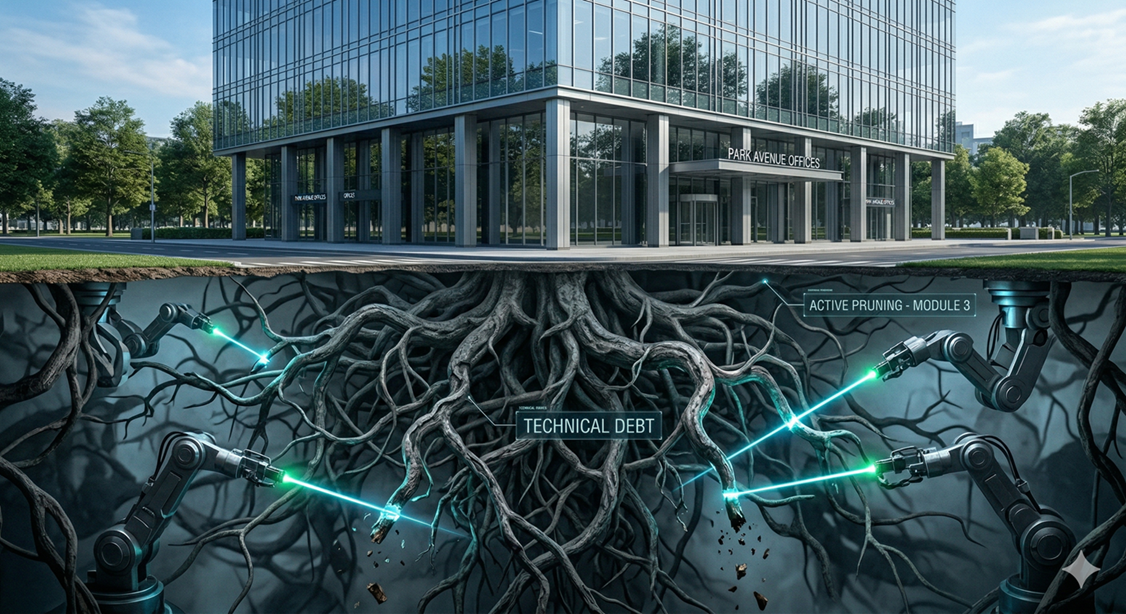

The danger of AI-generated code is not that it is bad in the obvious sense: syntax errors, missing imports, or broken logic that fails immediately. The real danger is more insidious: AI writes 'convincing' code. It produces solutions that pass code review at a glance, satisfy the immediate requirements, and ship without incident. The problems surface only weeks or months later, when the accumulated weight of individually reasonable but collectively harmful decisions begins to drag down the codebase. This phenomenon, which can be described as 'Shadow Logic', represents a category of technical debt that the traditional software development lifecycle (SDLC) is not equipped to detect.

Shadow Logic manifests in predictable patterns. An AI agent solving an immediate ticket might introduce a direct database query in the view layer because it produces the correct output faster than routing through the established service layer. It might duplicate a utility function rather than importing the existing one because the duplicate was 'closer' in its generation context. It might hardcode a configuration value that should be environment-dependent because the hardcoded version satisfies the test suite. Each of these decisions is locally rational but architecturally corrosive.
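As a small illustration of the third pattern, the sketch below contrasts a hardcoded value with an environment-dependent one. The function names and the `PAYMENT_TIMEOUT_SECONDS` variable are hypothetical, chosen only for this example. Both versions would satisfy a test that expects a 30-second timeout, which is why the hardcoded one can slip through.

```python
import os
from typing import Mapping


def payment_timeout_hardcoded() -> float:
    # Shadow Logic version: correct for the test suite, wrong for any
    # environment that needs a different timeout.
    return 30.0


def payment_timeout(env: Mapping[str, str] = os.environ) -> float:
    # Environment-dependent version: same default, but overridable.
    # PAYMENT_TIMEOUT_SECONDS is a hypothetical variable name.
    return float(env.get("PAYMENT_TIMEOUT_SECONDS", "30"))
```

Passing the environment as a parameter is a design choice made here so the behavior can be checked without mutating the real process environment.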

Why Code Review Alone Is Insufficient

The SDLC refinement needed to address Shadow Logic begins with recognizing that traditional code review—while essential—is insufficient as the sole guardrail. Human reviewers are optimized for catching functional defects: 'Does this code do what it should?' They are much less effective at catching architectural drift: 'Does this code do what it should in the way it should, given our established patterns?' This gap is especially pronounced when AI generates code at a velocity that exceeds the review team's capacity for deep architectural analysis.

Linter-Driven Prompting

The concept of 'Architectural Guardrails' integrated directly into agentic workflows addresses this gap. Before an AI-generated suggestion reaches a human reviewer, it can be passed through a Linter-Driven Prompt loop—a semantic analysis layer that checks the generated code against the project's established patterns. Does the new code respect the separation between data access and business logic? Does it use the project's standard error handling patterns? Does it import from the canonical utility modules rather than reimplementing functionality?
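One of these checks, the separation between data access and the view layer, can be approximated with Python's standard `ast` module. The following is a minimal sketch, not a full semantic analyzer: the rule identifier `ARCH-001`, the `FORBIDDEN_IN_VIEWS` set, and the `check_view_layer` function are all assumptions made for illustration, and a real project would define its own list of data-access modules.

```python
from __future__ import annotations

import ast
from dataclasses import dataclass

# Hypothetical rule: view-layer modules may not import these
# data-access libraries directly.
FORBIDDEN_IN_VIEWS = {"sqlite3", "sqlalchemy", "psycopg2"}


@dataclass
class Violation:
    rule: str
    line: int
    message: str


def check_view_layer(source: str) -> list[Violation]:
    """Flag direct data-access imports in view-layer source code."""
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            names = [node.module or ""]
        else:
            continue
        for name in names:
            root = name.split(".")[0]
            if root in FORBIDDEN_IN_VIEWS:
                violations.append(
                    Violation(
                        rule="ARCH-001",
                        line=node.lineno,
                        message=f"view layer imports '{root}' directly; "
                        "route data access through the service layer",
                    )
                )
    return violations
```

An import check like this only catches the most direct form of the violation. It would miss a database handle passed in from elsewhere, so it is best read as a first filter rather than a complete guardrail.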

The power of this approach lies in the feedback loop. When the linter identifies a violation, such as a direct database call in a controller that should delegate to a repository layer, the violation is fed back to the AI agent as a structured error message, along with the specific architectural rule that was broken and an example of the correct pattern. The agent then regenerates its solution with that constraint in place. This iterative refinement happens before the human reviewer ever sees the code, which reduces the review burden and improves architectural consistency.
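The loop described above can be sketched as follows. This is an illustration under stated assumptions, not a reference implementation: `generate` stands in for whatever call invokes the AI agent, `lint` stands in for the architectural checker, and `fake_agent`, `toy_lint`, the rule ID `ARCH-001`, and the `max_rounds` cap are all hypothetical. The cap is an added safeguard so that the loop ends even if the agent never produces a clean result.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Violation:
    rule_id: str          # which architectural rule was broken
    message: str          # what the linter found
    correct_example: str  # the pattern the agent should follow instead


def format_feedback(violations: List[Violation]) -> str:
    """Render violations as a structured message for the next prompt."""
    return "\n".join(
        f"[{v.rule_id}] {v.message}\nExpected pattern: {v.correct_example}"
        for v in violations
    )


def refine(
    task: str,
    generate: Callable[[str, Optional[str]], str],
    lint: Callable[[str], List[Violation]],
    max_rounds: int = 3,
) -> Tuple[str, bool]:
    """Regenerate until the linter is satisfied or the round limit is hit.

    Returns the last generated code and whether it passed the linter.
    """
    code, feedback = "", None
    for _ in range(max_rounds):
        code = generate(task, feedback)
        violations = lint(code)
        if not violations:
            return code, True
        feedback = format_feedback(violations)
    return code, False


# Toy stand-ins so the sketch runs without a real agent or linter.
def fake_agent(task: str, feedback: Optional[str]) -> str:
    if feedback is None:
        return "def list_orders(db):\n    return db.execute('SELECT * FROM orders')"
    return "def list_orders(order_repository):\n    return order_repository.find_all()"


def toy_lint(code: str) -> List[Violation]:
    if "db.execute" in code:
        return [
            Violation(
                rule_id="ARCH-001",
                message="Direct database call in a controller.",
                correct_example="order_repository.find_all()",
            )
        ]
    return []
```

Calling `refine("list orders", fake_agent, toy_lint)` returns the repository-based version on the second round. If the round limit is reached instead, the second element of the result is `False`, and the violations can be surfaced to the human reviewer.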

DRY Violations and Dependency Creep

DRY (Don't Repeat Yourself) violations deserve special attention in the context of AI-generated code. LLMs tend to produce self-contained solutions, plausibly because their training favors complete, standalone answers. When asked to implement a feature, the model often generates all the necessary code inline rather than abstracting shared logic into reusable utilities. Without explicit guardrails, this tendency leads to subtle code duplication: functions that are nearly identical but differ in minor ways, which makes future refactoring increasingly difficult and error-prone.
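One way to surface functions that are "nearly identical but differ in minor ways" is to compare their syntax trees after erasing the parts that usually vary: local variable names and literal values. The sketch below uses only the standard `ast` module. The `find_near_duplicates` helper and the sample functions are hypothetical, and the approach catches only copies whose structure matches exactly after normalization.

```python
import ast
import copy
from collections import defaultdict
from typing import List


def _fingerprint(func: ast.AST) -> str:
    """Dump a function body with local names and literals blanked out."""
    func = copy.deepcopy(func)  # avoid mutating the caller's tree
    local_names = {n.arg for n in ast.walk(func) if isinstance(n, ast.arg)}
    local_names |= {
        n.id
        for n in ast.walk(func)
        if isinstance(n, ast.Name) and isinstance(n.ctx, ast.Store)
    }
    for node in ast.walk(func):
        if isinstance(node, ast.Name) and node.id in local_names:
            node.id = "_"
        elif isinstance(node, ast.Constant):
            node.value = None
    # Only the body is dumped, so the function's own name is ignored.
    return "\n".join(ast.dump(stmt) for stmt in func.body)


def find_near_duplicates(source: str) -> List[List[str]]:
    """Group function names whose normalized bodies are identical."""
    groups = defaultdict(list)
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            groups[_fingerprint(node)].append(node.name)
    return [names for names in groups.values() if len(names) > 1]


SAMPLE = '''
def slugify(title):
    return title.strip().lower().replace(" ", "-")

def make_slug(name):
    return name.strip().lower().replace(" ", "_")

def total(prices):
    return sum(prices)
'''
```

On `SAMPLE`, the two slug helpers are grouped together because they differ only in a parameter name and one string literal, while `total` is left alone. Very short functions can collide by accident, so a practical version would likely ignore bodies below some minimum size.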

Dependency management is another area where Shadow Logic accumulates silently. AI agents may suggest importing a library to solve a problem that the project's existing utilities already handle. Each unnecessary dependency adds to the project's attack surface, increases build times, and creates a future maintenance obligation when the library releases breaking changes. A guardrail that checks proposed imports against approved dependencies—or at minimum flags any new dependency for explicit human approval—prevents this class of debt from accumulating unnoticed.
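A dependency guardrail of this kind can be sketched as an import scan against an allowlist. In the example below, the `APPROVED` and `FIRST_PARTY` sets and the package name `leftpad` are hypothetical. The check relies on `sys.stdlib_module_names`, which is available in Python 3.10 and later, and falls back to an empty set on older versions, where standard-library imports would also be flagged.

```python
import ast
import sys
from typing import Iterable, List

# Hypothetical project policy: third-party packages already approved,
# and the project's own top-level packages.
APPROVED = frozenset({"requests", "pydantic"})
FIRST_PARTY = frozenset({"app"})


def unapproved_imports(
    source: str,
    approved: Iterable[str] = APPROVED,
    first_party: Iterable[str] = FIRST_PARTY,
) -> List[str]:
    """Return top-level packages imported by `source` that need approval."""
    found = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            found |= {alias.name.split(".")[0] for alias in node.names}
        elif isinstance(node, ast.ImportFrom):
            # level > 0 means a relative import, which is always first-party.
            if node.level == 0 and node.module:
                found.add(node.module.split(".")[0])
    stdlib = getattr(sys, "stdlib_module_names", frozenset())
    return sorted(found - set(approved) - set(first_party) - set(stdlib))
```

Any name this function returns would be routed to a human for explicit approval rather than rejected outright, which matches the minimum guardrail described above.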

Building a Codebase That Gets Better Over Time

The organizational refinement required extends to how teams structure their AI-assisted workflows. Rather than treating AI code generation as a black box that produces solutions to be accepted or rejected, teams benefit from treating it as a collaborative process with defined stages: generation, automated validation, guided correction, and human review. Each stage reduces the burden on the next.

The long-term benefit of this discipline is a codebase that improves in coherence over time rather than degrading. Without guardrails, AI-accelerated development tends to produce codebases that grow in volume but shrink in architectural clarity. With Linter-Driven Prompting, the AI becomes a vehicle for enforcing the high standards that senior architects define but lack the bandwidth to apply manually to every pull request. The team gains not just speed but an active defense against the entropy that has historically accompanied rapid development.