Traditional observability in software systems is fundamentally reactive. The standard workflow follows a well-worn pattern: a user encounters an error, the monitoring system captures a log or fires an alert, an engineer investigates the incident, and the team deploys a fix. Each step in this chain introduces latency—sometimes minutes, sometimes hours, and in complex distributed systems, sometimes days. The most frustrating aspect of this reactive model is that the bug often existed in the codebase for weeks before a specific combination of inputs and environmental conditions triggered it in production.

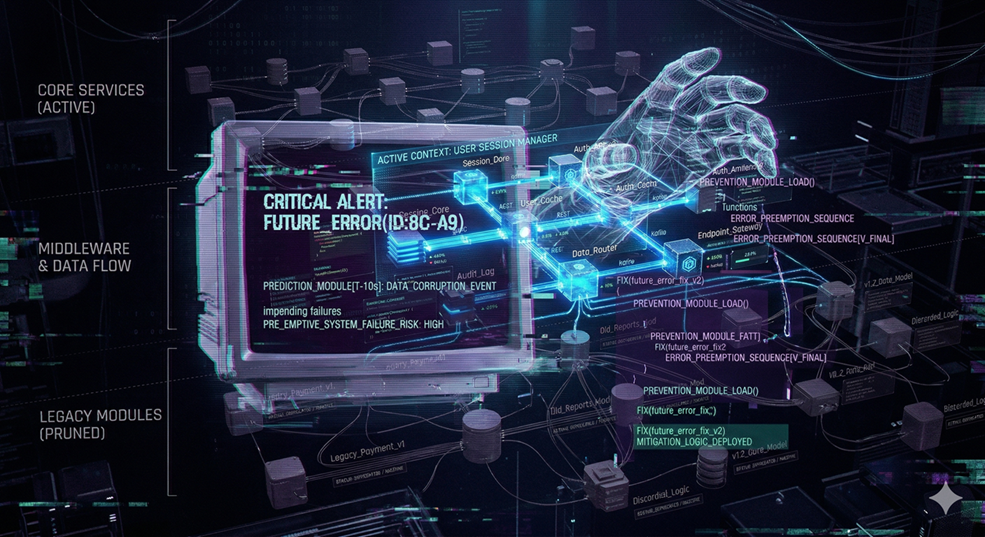

'Synthetic Telemetry' is a refinement to the observability phase of the software development lifecycle (SDLC) that inverts this reactive model. Instead of waiting for real-world failures to generate real telemetry, the approach uses AI agents, specifically Small Language Models (SLMs) fine-tuned on historical production data, to simulate potential failure scenarios before code reaches production. The agent analyzes a code change, considers the range of possible inputs and environmental conditions, and generates synthetic log entries and error traces that represent plausible failure modes.

The distinction between SLMs and larger foundation models is meaningful in this context. Foundation models are general-purpose reasoners optimized for breadth of knowledge. SLMs, by contrast, can be fine-tuned on a specific organization's log corpus—years of production logs, incident reports, postmortem documents, and error patterns. This specialization allows the SLM to generate failure predictions that are contextually relevant to the specific codebase and infrastructure, rather than generic failure patterns drawn from broad training data.
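As a rough illustration of what preparing a log corpus for fine-tuning could involve, the sketch below turns incident records into prompt/completion pairs in JSON Lines form. The record fields (`diff_summary`, `log_excerpt`, `root_cause`), the sample incidents, and the prompt wording are all assumptions made for this example; they are not a format required by any particular fine-tuning framework.

```python
import json

# Hypothetical incident records; real field names depend on the
# organization's logging and postmortem tooling.
INCIDENTS = [
    {
        "diff_summary": "Changed retry backoff in charge_card()",
        "log_excerpt": "TimeoutError: gateway did not respond within 5s",
        "root_cause": "Retries stacked up during a gateway slowdown",
    },
    {
        "diff_summary": "Added local-time formatting to invoice export",
        "log_excerpt": "ValueError: hour must be in 0..23",
        "root_cause": "Daylight saving transition was not handled",
    },
]


def to_training_pair(incident: dict) -> dict:
    """Map one incident to a prompt/completion pair (assumed layout)."""
    prompt = (
        "Code change: " + incident["diff_summary"] + "\n"
        "Predict a plausible failure log:"
    )
    completion = incident["log_excerpt"] + "\nCause: " + incident["root_cause"]
    return {"prompt": prompt, "completion": completion}


def to_jsonl(incidents: list) -> str:
    """Serialize all pairs as JSON Lines, one training example per line."""
    return "\n".join(json.dumps(to_training_pair(i)) for i in incidents)


if __name__ == "__main__":
    print(to_jsonl(INCIDENTS))
```

Pairing each code change with the log it eventually produced is one plausible way to teach a model the organization's own failure vocabulary; other layouts may suit a given framework better.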

The SDLC refinement begins during code review. When a developer opens a pull request that modifies a payment processing function, the synthetic telemetry agent analyzes the diff in the context of the function's historical behavior. It considers questions such as: What happens if the external payment gateway returns a timeout? What if the retry logic encounters a race condition with a concurrent request? What if the input amount exceeds the expected numeric range? For each scenario, the agent generates a synthetic stack trace that shows how the failure would likely manifest, shifting code review from pattern-matching toward scenario exploration.
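A minimal sketch of what the agent's output for such a pull request might look like is shown below. The `FailureScenario` type, the function and exception names in the predicted call paths, and the `PR-EXAMPLE` identifier are hypothetical; in the approach described here the scenarios would come from the model rather than a hard-coded list.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class FailureScenario:
    name: str       # short identifier for the scenario
    trigger: str    # condition that would cause the failure
    exception: str  # exception type the agent expects to see
    frames: tuple   # predicted call path, outermost first


# Hypothetical scenarios for a pull request touching a payment function.
SCENARIOS = [
    FailureScenario(
        name="gateway_timeout",
        trigger="external payment gateway does not respond",
        exception="TimeoutError",
        frames=("process_payment", "charge_card", "gateway_client.post"),
    ),
    FailureScenario(
        name="retry_race",
        trigger="retry overlaps with a concurrent request for the same order",
        exception="DuplicateChargeError",
        frames=("process_payment", "retry_charge", "ledger.insert"),
    ),
    FailureScenario(
        name="amount_out_of_range",
        trigger="input amount exceeds the expected numeric range",
        exception="OverflowError",
        frames=("process_payment", "to_minor_units"),
    ),
]


def render_synthetic_log(scenario: FailureScenario, pr_id: str) -> str:
    """Render one scenario as a JSON log line, flagged as synthetic."""
    record = {
        "synthetic": True,  # keeps predictions separable from real telemetry
        "pr": pr_id,
        "scenario": scenario.name,
        "trigger": scenario.trigger,
        "exception": scenario.exception,
        "stack": list(scenario.frames),
    }
    return json.dumps(record, sort_keys=True)


if __name__ == "__main__":
    for s in SCENARIOS:
        print(render_synthetic_log(s, pr_id="PR-EXAMPLE"))
```

Tagging every record with `"synthetic": True` is a design choice worth keeping in any variant, so that predicted failures can never be mistaken for real incidents in downstream dashboards.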

The testing phase benefits in a similar way. Traditional testing strategies focus on validating expected behavior: given input X, the system should produce output Y. Edge case testing receives less attention because identifying meaningful edge cases requires deep domain knowledge. Synthetic telemetry agents can systematically generate edge case test scenarios based on historical failure patterns. If the organization's log history shows a pattern of failures related to timezone handling during daylight saving transitions, the agent can proactively generate test cases that exercise that boundary condition in any code that processes timestamps.
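The daylight saving case can be sketched as a small generator of boundary inputs plus a property check. The helper names and the `bucket_by_hour` function under test are hypothetical, and the transition instant is hard-coded for illustration (US Eastern clocks moved forward at 07:00 UTC on 10 March 2024); a real generator would derive transitions from a timezone database.

```python
from datetime import datetime, timedelta, timezone


def dst_boundary_probes(transition_utc, step=timedelta(seconds=1)):
    """Return instants just before, at, and just after a transition."""
    if transition_utc.tzinfo is None:
        raise ValueError("transition_utc must be timezone-aware")
    return [transition_utc - step, transition_utc, transition_utc + step]


def bucket_by_hour(ts):
    """Hypothetical function under test: floor a timestamp to its UTC hour."""
    utc = ts.astimezone(timezone.utc)
    return utc.replace(minute=0, second=0, microsecond=0)


def check_non_decreasing(fn, probes):
    """Outputs should never move backwards as input time advances."""
    outputs = [fn(p) for p in probes]
    return all(a <= b for a, b in zip(outputs, outputs[1:]))


# Example transition: US Eastern spring-forward, 10 March 2024, 07:00 UTC.
SPRING_FORWARD_2024 = datetime(2024, 3, 10, 7, 0, tzinfo=timezone.utc)

if __name__ == "__main__":
    probes = dst_boundary_probes(SPRING_FORWARD_2024)
    print(check_non_decreasing(bucket_by_hour, probes))
```

Working in UTC instants sidesteps nonexistent and ambiguous local times when constructing the probes, while still exercising the code at the moment where local-time logic tends to break.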

One particularly valuable application is in serverless and event-driven architectures, where traditional debugging is especially challenging. A serverless function that processes events from a queue might behave correctly for 99.9 percent of messages but fail on messages with specific characteristics, such as unusually large payloads, unexpected character encodings, or non-standard timestamp formats. Synthetic telemetry agents can analyze the function's code and the schema of incoming events to predict these failure modes, generating synthetic log entries that show the predicted error path before any real message triggers it.
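To make those three message characteristics concrete, the sketch below builds one event per predicted failure mode and records how a handler reacts to each. The `handle_event` function, the `MAX_BODY_BYTES` limit, and the event layout are assumptions for illustration; the example replays predictions locally rather than showing how a model produces them.

```python
import json
from datetime import datetime

MAX_BODY_BYTES = 256 * 1024  # assumed limit, for illustration only


def handle_event(event: dict) -> str:
    """Hypothetical queue handler used as the function under analysis."""
    body = event["body"]
    if len(body) > MAX_BODY_BYTES:
        raise ValueError("payload too large")
    payload = json.loads(body.decode("utf-8"))
    return datetime.fromisoformat(payload["timestamp"]).isoformat()


def make_body(timestamp: str, note: str = "", encoding: str = "utf-8") -> bytes:
    """Build a message body in the assumed event schema."""
    text = json.dumps({"timestamp": timestamp, "note": note}, ensure_ascii=False)
    return text.encode(encoding)


GOOD_TS = "2024-01-01T00:00:00"

# One event per predicted failure mode, plus a baseline that should pass.
EDGE_EVENTS = {
    "baseline": {"body": make_body(GOOD_TS)},
    "oversized_payload": {"body": make_body(GOOD_TS, note="x" * MAX_BODY_BYTES)},
    "latin1_encoding": {"body": make_body(GOOD_TS, note="caf\u00e9", encoding="latin-1")},
    "non_iso_timestamp": {"body": make_body("01/02/2024 3pm")},
}


def probe(handler, events: dict) -> dict:
    """Run each event and record the exception type, or None on success."""
    findings = {}
    for name, event in events.items():
        try:
            handler(event)
            findings[name] = None
        except Exception as exc:
            findings[name] = type(exc).__name__
    return findings


if __name__ == "__main__":
    for name, outcome in probe(handle_event, EDGE_EVENTS).items():
        print(name, "->", outcome or "ok")
```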

The operational refinement extends to staging and pre-production environments. Traditional staging environments attempt to mirror production, but the traffic they receive is synthetic and rarely captures the full distribution of real-world usage. Synthetic telemetry agents can augment staging validation by predicting failure scenarios that would only manifest under production-like conditions, such as high concurrency, degraded network conditions, and partial service outages. They then generate the corresponding synthetic logs so the team can evaluate whether error handling and graceful degradation mechanisms respond appropriately.
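One way a team might check graceful degradation against predicted conditions is simple fault injection, sketched below. The `get_recommendations` service call, its fallback value, and the fault names are hypothetical; the injected exceptions stand in for the degraded network and partial outage conditions mentioned above.

```python
import logging

logger = logging.getLogger("synthetic")


def get_recommendations(user_id, fetch, fallback=("bestsellers",)):
    """Hypothetical service call that degrades to a static fallback."""
    try:
        return fetch(user_id)
    except (TimeoutError, ConnectionError) as exc:
        logger.warning("recommendations degraded: %s", type(exc).__name__)
        return list(fallback)


def failing_with(exc_type):
    """Build a stand-in dependency that always raises the given fault."""
    def _fetch(_user_id):
        raise exc_type("injected fault")
    return _fetch


# Predicted conditions mapped to the exception each would surface as.
PREDICTED_FAULTS = {
    "network_timeout": TimeoutError,
    "partial_outage": ConnectionError,
}


def evaluate_degradation() -> dict:
    """Return what the caller receives under each injected fault."""
    return {
        name: get_recommendations("user-1", failing_with(exc_type))
        for name, exc_type in PREDICTED_FAULTS.items()
    }


if __name__ == "__main__":
    print(evaluate_degradation())
```

If any entry in the result is not the fallback value, or an exception escapes instead, the error handling for that predicted condition needs attention before release.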

The feedback loop between synthetic telemetry predictions and actual production incidents creates a continuous learning cycle. When the agent predicts a failure that subsequently occurs, the prediction is validated. When a production failure occurs that the agent did not predict, the incident data is fed back into the model's training corpus, expanding its failure pattern vocabulary. Over time, this feedback loop should narrow the gap between predicted and actual failure modes.
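The bookkeeping behind this loop can be sketched with plain set operations. The failure signatures below are hypothetical labels, and the `coverage` figure (the share of observed incidents that had been predicted) is one possible metric, not one prescribed by the approach.

```python
def reconcile(predicted, observed) -> dict:
    """Compare predicted failure signatures with observed incidents."""
    predicted, observed = set(predicted), set(observed)
    confirmed = predicted & observed   # predicted and then seen in production
    missed = observed - predicted      # candidates for the training corpus
    unobserved = predicted - observed  # predicted but not (yet) seen
    coverage = len(confirmed) / len(observed) if observed else 1.0
    return {
        "confirmed": sorted(confirmed),
        "missed": sorted(missed),
        "unobserved": sorted(unobserved),
        "coverage": coverage,
    }


if __name__ == "__main__":
    report = reconcile(
        predicted={"gateway_timeout", "retry_race", "amount_out_of_range"},
        observed={"gateway_timeout", "currency_mismatch"},
    )
    print(report)
```

In this sketch, everything under `missed` would be queued for the next fine-tuning round, and tracking `coverage` across releases gives a rough sense of whether the gap is actually narrowing.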

For teams considering this approach, the practical starting point does not require building a custom SLM from scratch. Several open-source frameworks support fine-tuning smaller models on custom log data, and most organizations already possess the historical log corpus needed. The expected return is a shift in the SDLC that teams can measure: fewer production incidents, faster code reviews with better edge case coverage, and a culture of proactive quality assurance. The observability strategy evolves from 'What happened?' to 'What might happen?', a question that costs far less to answer before deployment than after an incident page at 3 AM.