The industry has fallen into a 'token trap.' The prevailing narrative suggests that larger context windows—now hitting the 2M+ mark—solve every challenge in code-aware AI tooling. But senior developers working on enterprise-scale repositories know a different reality: the 'Lost in the Middle' phenomenon is measurable and consequential. When you dump an entire monolithic repository into an LLM's context, the model's attention distribution flattens. It loses focus on the specific business logic buried deep in your service layer because it is distracted by thousands of lines of boilerplate UI components, auto-generated code, and configuration files.

This attention degradation creates a hidden cost across the SDLC. During the design phase, when a developer asks the AI to suggest an architectural improvement, the model's response quality degrades in direct proportion to the noise-to-signal ratio in its context. A suggestion that should reference your specific database abstraction layer instead references a generic ORM pattern because the relevant code was buried among irrelevant files. Research from multiple AI labs has confirmed that model accuracy drops significantly for information positioned in the middle of very large contexts.

Traditional vector-based Retrieval-Augmented Generation (RAG) was designed to solve this problem, but it introduces its own limitations for code repositories. Standard RAG systems chunk text into fixed-size segments, embed them into vector space, and retrieve the most semantically similar chunks for a given query. This approach works reasonably well for natural language documents but stumbles with source code because it destroys structural relationships. A function's signature chunk might be retrieved without its implementation, or a database schema definition might arrive without the migration history that explains why a column was added.

The refinement that Graph-RAG and Abstract Syntax Tree (AST) Indexing bring to this problem is architecturally significant. Instead of treating source code as flat text to be chunked and embedded, AST indexing parses the code into its syntactic structure—functions, classes, imports, dependencies, type definitions, and call hierarchies. This structural representation preserves the relationships that traditional chunking destroys. When a developer asks about a payment processing function, the retrieval system can follow the dependency graph to include the relevant database model, the configuration constants, and the error handling utilities—without including unrelated UI components that happen to live in nearby files.

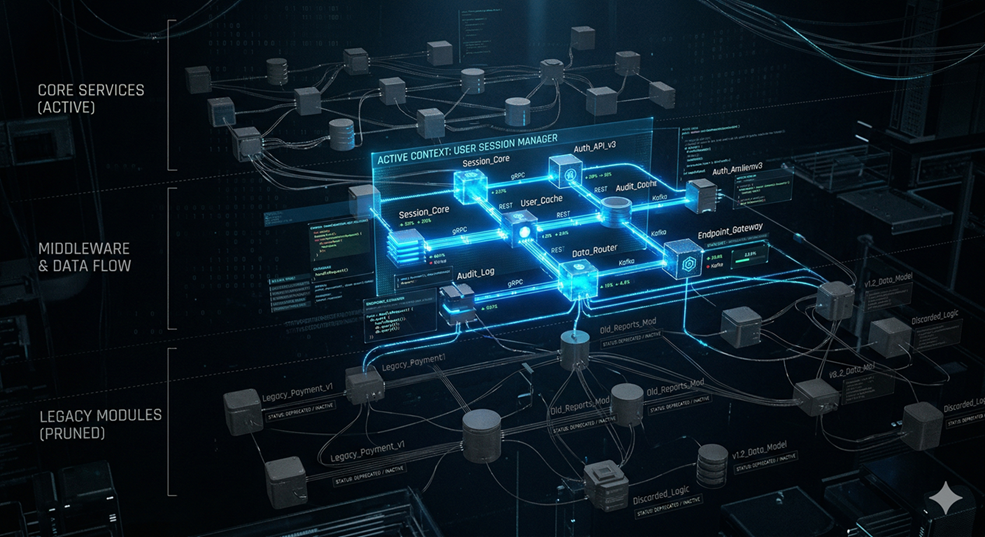

Graph-based retrieval extends this concept by building a knowledge graph of the codebase's architecture. Each node represents a meaningful code entity—a module, a class, a function, a type. Edges represent relationships: imports, function calls, inheritance, type usage, and data flow. When a query arrives, the system traverses this graph to retrieve exactly the subgraph that is relevant, pruning everything else. This 'Context Pruning' strategy ensures that the LLM receives a focused, structurally coherent snapshot rather than a disorganized pile of text chunks.

The SDLC refinement from this approach manifests most clearly during code review and refactoring. When an AI agent is asked to review a pull request, it needs to understand not just the changed files but their architectural context—how the modified function interacts with its callers, what data flows through it, and what invariants the surrounding code expects. A graph-pruned context provides this understanding efficiently, while a naive 'dump everything' approach either exceeds token limits or drowns the signal in noise.

For refactoring tasks specifically, the quality improvement can be dramatic. When the AI suggests renaming a function, moving a module, or extracting a shared utility, it needs to trace every usage across the codebase. Graph-based retrieval handles this naturally because the call graph and import graph are first-class citizens in the index. The model can confidently identify all callers, all dependents, and all test files that reference the affected code—a task that traditional RAG handles poorly because the connections between chunks are lost during embedding.

The cost implications compound across the SDLC lifecycle. Every API call to a large language model incurs a 'Token Tax'—the cost scales linearly with the number of input tokens. Organizations running AI-assisted development workflows at scale face significant inference costs. Context pruning strategies that reduce the input size by 60 to 70 percent translate directly into proportional cost savings, while simultaneously improving response quality because the model's attention is concentrated on relevant material. For teams evaluating these approaches, the key architectural decision is to move away from treating code as undifferentiated text and instead invest in structural representations that preserve the relationships and dependencies that give code its meaning.