The Software Testing Life Cycle (STLC) is a notorious bottleneck in continuous delivery pipelines. Teams often spend as much time maintaining broken Selenium or Cypress scripts as they do writing core application features. A UI change can break selectors, and an API update can require a cascade of test modifications. The cumulative cost of this maintenance debt is high: by some industry survey estimates, QA teams spend upwards of 40 percent of their sprint capacity fixing flaky or broken tests rather than expanding meaningful coverage.

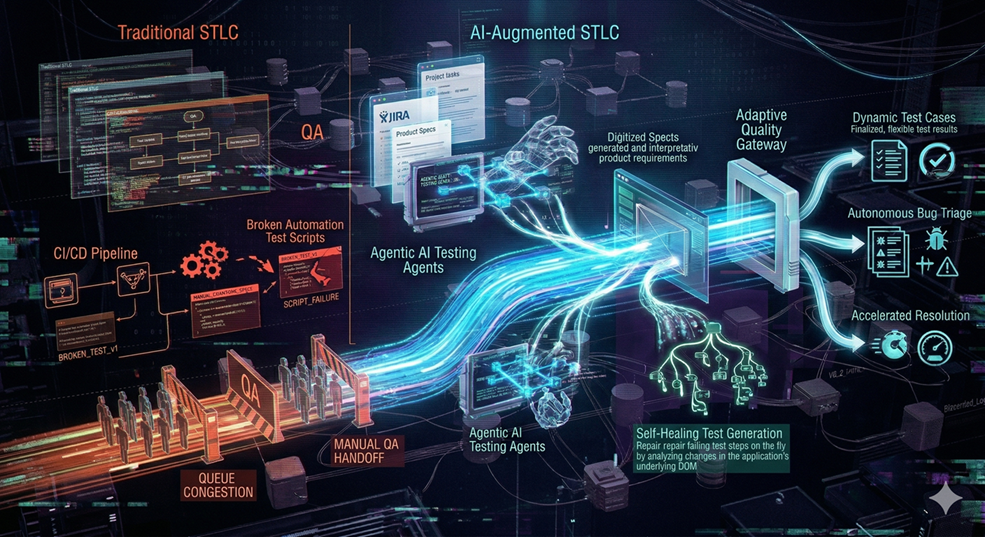

Agentic AI has the potential to transform this dynamic entirely. Instead of statically coded scripts that mirror a fixed user journey, modern AI testing agents can parse raw requirements directly from tools like JIRA, Confluence, or structured product specifications. From these inputs, they dynamically generate testing paths that reflect the actual intent behind each user story rather than a rigid sequence of click-and-assert steps.

The refinement this brings to the test design phase of the STLC is substantial. Traditional test design requires a QA engineer to read a requirement, manually identify test scenarios, write step-by-step test cases, and then translate those cases into automation code. Each of those handoffs introduces interpretation risk—what the product owner intended, what the QA engineer understood, and what the automation script actually validates can diverge significantly. An AI agent that reads the requirement directly and generates test scenarios from it compresses these handoffs into a single, traceable step.
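To make "a single, traceable step" concrete, here is a minimal sketch in which every generated scenario keeps a link to the requirement and the exact acceptance criterion it came from. The names `Requirement`, `Scenario`, and `generate_scenarios` are hypothetical, the issue key is made up, and `placeholder_generator` is a rule-based stand-in for whatever model call an actual agent would make.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Requirement:
    key: str                        # e.g. an issue-tracker key (made-up value below)
    acceptance_criteria: List[str]


@dataclass
class Scenario:
    requirement_key: str            # trace back to the source requirement
    criterion: str                  # the exact criterion this scenario came from
    steps: List[str]


def placeholder_generator(criterion: str) -> List[str]:
    """Stand-in for a model call: split a Given/When/Then sentence into steps."""
    steps: List[str] = []
    current: List[str] = []
    for word in criterion.split():
        if word in ("Given", "When", "Then") and current:
            steps.append(" ".join(current))
            current = []
        current.append(word)
    if current:
        steps.append(" ".join(current))
    return steps


def generate_scenarios(
    req: Requirement,
    generator: Callable[[str], List[str]] = placeholder_generator,
) -> List[Scenario]:
    """One scenario per acceptance criterion, each carrying its provenance."""
    return [Scenario(req.key, c, generator(c)) for c in req.acceptance_criteria]


requirement = Requirement(
    key="SHOP-1",
    acceptance_criteria=[
        "Given a logged-in user When they apply a discount code "
        "Then the total is reduced",
    ],
)
scenarios = generate_scenarios(requirement)
for scenario in scenarios:
    print(scenario.requirement_key, scenario.steps)
```

Because the generator is passed in as a plain callable, the placeholder can be swapped for a real model-backed function without changing how provenance is recorded.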

When an application changes visually or structurally, a self-healing testing agent does not simply fail and halt the pipeline. It analyzes the current DOM structure, identifies candidate elements that correspond to the original test intent, updates the affected locators, and reports the change for human review. This matters because in traditional automation a single renamed CSS class can cascade into dozens of test failures, each requiring manual investigation to determine whether it represents a genuine defect or merely an outdated locator.
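The self-healing step can be sketched as a similarity search over the current DOM. The example below is an illustration under simplifying assumptions, not how any particular tool implements it: DOM nodes are modeled as plain attribute dictionaries, similarity comes from the standard library's `difflib.SequenceMatcher`, and the `heal_locator` function and its `0.6` threshold are hypothetical choices.

```python
from difflib import SequenceMatcher
from typing import Dict, List, Optional, Tuple

Element = Dict[str, str]  # toy stand-in for a DOM node's attributes


def similarity(a: str, b: str) -> float:
    """String similarity in the range 0..1."""
    return SequenceMatcher(None, a, b).ratio()


def score(original: Element, candidate: Element) -> float:
    """Average similarity over the attributes recorded for the original element."""
    if not original:
        return 0.0
    total = sum(similarity(v, candidate.get(k, "")) for k, v in original.items())
    return total / len(original)


def heal_locator(
    original: Element, dom: List[Element], threshold: float = 0.6
) -> Tuple[Optional[Element], float]:
    """Return the best-matching element, or None if nothing clears the threshold."""
    best: Optional[Element] = None
    best_score = 0.0
    for element in dom:
        s = score(original, element)
        if s > best_score:
            best, best_score = element, s
    if best_score < threshold:
        return None, best_score  # no confident match: let the test fail normally
    return best, best_score


# Attributes recorded when the test last passed.
recorded = {"tag": "button", "class": "btn-checkout", "text": "Place order"}

# Current page: the CSS class was renamed.
current_dom = [
    {"tag": "a", "class": "nav-link", "text": "Home"},
    {"tag": "button", "class": "checkout-button", "text": "Place order"},
]

healed, confidence = heal_locator(recorded, current_dom)
if healed is not None:
    # Always surface the substitution so a human can confirm it.
    print(f"Locator healed (confidence {confidence:.2f}), flag for review: {healed}")
```

The threshold is the important design choice: set too low, the agent may silently "heal" onto the wrong element and mask a genuine defect, which is why the substitution is reported rather than applied quietly.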

Defect triage, one of the most time-consuming phases of the STLC, is where AI-augmented testing delivers some of its most visible gains. When a traditional test fails, a QA engineer must reproduce the issue, capture evidence, classify the severity, identify the probable root cause, and write a bug report. AI agents can automate much of this work: capturing screenshots and network traces at the point of failure, correlating the failure with recent code changes, and generating a preliminary bug report that includes the suspected commit, the affected user flow, and a recommended severity level.
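As a rough sketch of the correlation step, the example below picks a suspected commit by file overlap and drafts a preliminary report. Everything here is an assumption made for illustration: the `Commit`, `Failure`, and `BugReport` types, the commit hashes and file paths, the premise that the files exercised by a test are known, and the two-level severity rule. A real agent would draw on richer signals such as stack traces and network logs.

```python
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass
class Commit:
    sha: str
    files: Set[str]


@dataclass
class Failure:
    test_name: str
    user_flow: str
    touched_files: Set[str]  # files the failing test exercises (assumed known)
    blocks_flow: bool        # True if the user cannot complete the flow


@dataclass
class BugReport:
    title: str
    suspected_commit: Optional[str]
    affected_flow: str
    severity: str


def suspect_commit(failure: Failure, commits: List[Commit]) -> Optional[Commit]:
    """Pick the commit sharing the most files with the failing test.

    `commits` is assumed to be ordered newest first, so ties go to the
    most recent change.
    """
    best: Optional[Commit] = None
    best_overlap = 0
    for commit in commits:
        overlap = len(commit.files & failure.touched_files)
        if overlap > best_overlap:
            best, best_overlap = commit, overlap
    return best


def draft_report(failure: Failure, commits: List[Commit]) -> BugReport:
    """Assemble a preliminary report for a human to confirm or correct."""
    commit = suspect_commit(failure, commits)
    return BugReport(
        title=f"{failure.test_name} failed in {failure.user_flow}",
        suspected_commit=commit.sha if commit else None,
        affected_flow=failure.user_flow,
        severity="high" if failure.blocks_flow else "medium",
    )


recent_commits = [
    Commit("abc123", {"checkout/cart.py", "checkout/discounts.py"}),
    Commit("def456", {"profile/settings.py"}),
]
failure = Failure(
    test_name="test_apply_discount",
    user_flow="checkout",
    touched_files={"checkout/discounts.py", "checkout/cart.py"},
    blocks_flow=True,
)
report = draft_report(failure, recent_commits)
print(report)
```

When no recent commit overlaps with the failing test, `suspected_commit` is left as `None` rather than guessed, which keeps the report honest about what the correlation could establish.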

Beyond individual test execution, AI-augmented STLC refines the strategic question of test coverage. Traditional coverage metrics—line coverage, branch coverage, path coverage—are useful but blunt instruments. They tell you that a line of code was executed, not that a meaningful business scenario was validated. AI agents can analyze application behavior holistically, identifying coverage gaps not in terms of code lines but in terms of user journeys that remain untested—surfacing, for instance, that a critical checkout flow has no test coverage for the scenario where a discount code is applied to a subscription product.
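Journey-level coverage can be approximated by enumerating the combinations that matter and subtracting those already tested. The sketch below assumes that a journey can be described by a handful of named dimensions; the dimension names and values are illustrative, loosely following the checkout example above. Deciding which dimensions matter is exactly the part an agent would need to infer from application behavior.

```python
from itertools import product
from typing import Dict, List, Set, Tuple

Journey = Tuple[Tuple[str, str], ...]  # e.g. (("product_type", "subscription"), ...)


def enumerate_journeys(dimensions: Dict[str, List[str]]) -> Set[Journey]:
    """Every combination of the listed journey dimensions."""
    keys = list(dimensions)
    return {
        tuple(zip(keys, combo))
        for combo in product(*(dimensions[k] for k in keys))
    }


def coverage_gaps(
    dimensions: Dict[str, List[str]], tested: Set[Journey]
) -> List[Journey]:
    """Journeys that exist in principle but have no test."""
    return sorted(enumerate_journeys(dimensions) - tested)


checkout_dimensions = {
    "product_type": ["one_time", "subscription"],
    "discount_code": ["none", "applied"],
}

tested_journeys = {
    (("product_type", "one_time"), ("discount_code", "none")),
    (("product_type", "one_time"), ("discount_code", "applied")),
    (("product_type", "subscription"), ("discount_code", "none")),
}

gaps = coverage_gaps(checkout_dimensions, tested_journeys)
for gap in gaps:
    print(dict(gap))
# prints: {'product_type': 'subscription', 'discount_code': 'applied'}
```

Line coverage could report 100 percent for the discount and subscription code paths individually while this combination remains untested, which is the gap code-level metrics miss.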

The regression testing phase benefits from a particularly meaningful refinement. Traditional regression suites grow monotonically—new tests are added but old tests are rarely pruned, leading to ever-longer execution times and increasingly fragile pipelines. An AI-augmented approach continuously evaluates which tests are redundant, which tests are consistently stable and can be deprioritized, and which tests cover high-risk areas that warrant more frequent execution. This intelligent test suite management keeps the pipeline fast without sacrificing confidence.
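A minimal sketch of this kind of suite management follows, assuming each test is annotated with the journeys it covers, a recent failure rate, and a risk weight. The `SuiteEntry` type, the subset-based redundancy rule, and the `risk * (1 + failure_rate)` scoring formula are illustrative assumptions, not an established method.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class SuiteEntry:
    name: str
    covers: FrozenSet[str]  # journeys or features the test exercises
    failure_rate: float     # fraction of recent runs that failed
    risk: float             # 0..1, business risk of the covered area


def find_redundant(entries: List[SuiteEntry]) -> List[str]:
    """Flag tests whose coverage is fully contained in another test's coverage."""
    redundant = []
    for i, a in enumerate(entries):
        for j, b in enumerate(entries):
            if i == j:
                continue
            # Strict subset, or identical coverage where the earlier entry wins.
            if a.covers < b.covers or (a.covers == b.covers and j < i):
                redundant.append(a.name)
                break
    return redundant


def prioritize(entries: List[SuiteEntry]) -> List[SuiteEntry]:
    """Run high-risk, recently unstable tests first; stable low-risk tests last."""
    return sorted(entries, key=lambda e: e.risk * (1 + e.failure_rate), reverse=True)


suite = [
    SuiteEntry("profile_edit", frozenset({"profile"}), 0.0, 0.2),
    SuiteEntry("cart_only", frozenset({"cart"}), 0.0, 0.4),
    SuiteEntry(
        "checkout_full", frozenset({"cart", "payment", "confirmation"}), 0.1, 0.9
    ),
]

print("Candidates for pruning:", find_redundant(suite))
print("Execution order:", [e.name for e in prioritize(suite)])
```

Overlapping coverage does not prove that two tests make the same assertions, so the output of `find_redundant` is better treated as a list of candidates for human review than as an automatic deletion list.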

For organizations at the beginning of this transition, the practical path forward is not to discard existing test infrastructure overnight but to layer AI capabilities on top of existing frameworks. Existing Cypress or Playwright tests can be augmented with self-healing locator strategies. Manual test case libraries can serve as training data for automated scenario generation. By adopting an AI-augmented STLC, teams can move from regression gridlock toward an adaptive quality gate, where the test infrastructure not only executes tests but also triages defects, identifies coverage gaps, and shortens resolution times across sprints.